Which Standards Has vCare Chosen to Use, and Why?

vCare is very much about innovating through the integration of existing, mature components. The project has therefore conducted a systematic analysis of existing standards and favored open-source components wherever possible. This short article gives a concise overview of the choices vCare made.

The “vCare Smart Coaching Platform” (hereafter “the platform”) is built on top of existing applications and services provided by the different partners developing the solution.

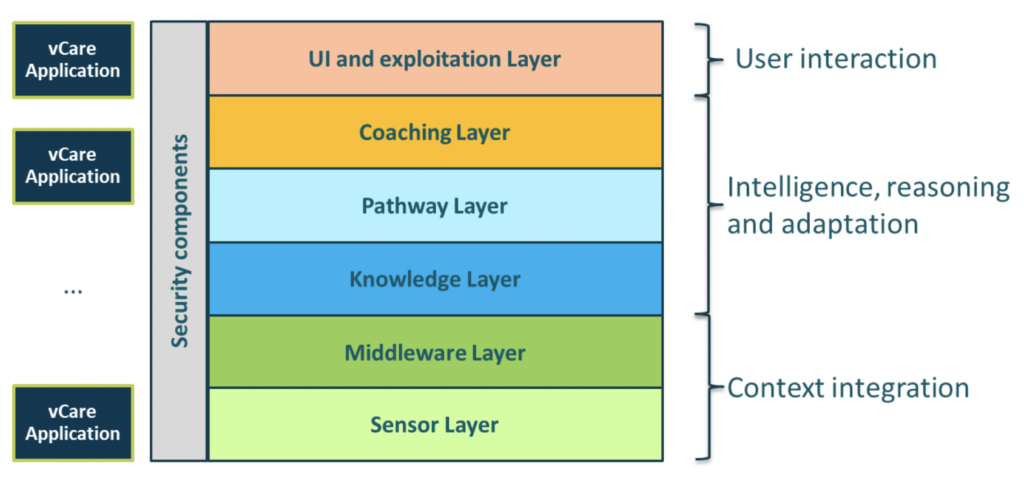

The platform is built around four main blocks: 1) context integration of data, 2) intelligence, reasoning and adaptation of coaching services, 3) user interaction and 4) security. The details of these building blocks are depicted in figure 1.

These applications and services rest on a heterogeneous technical stack and are integrated through seven technical layers. This required an abstract integration approach based on existing standards, so that the “vCare Smart Coaching Platform” remains open and its parts integrate seamlessly.

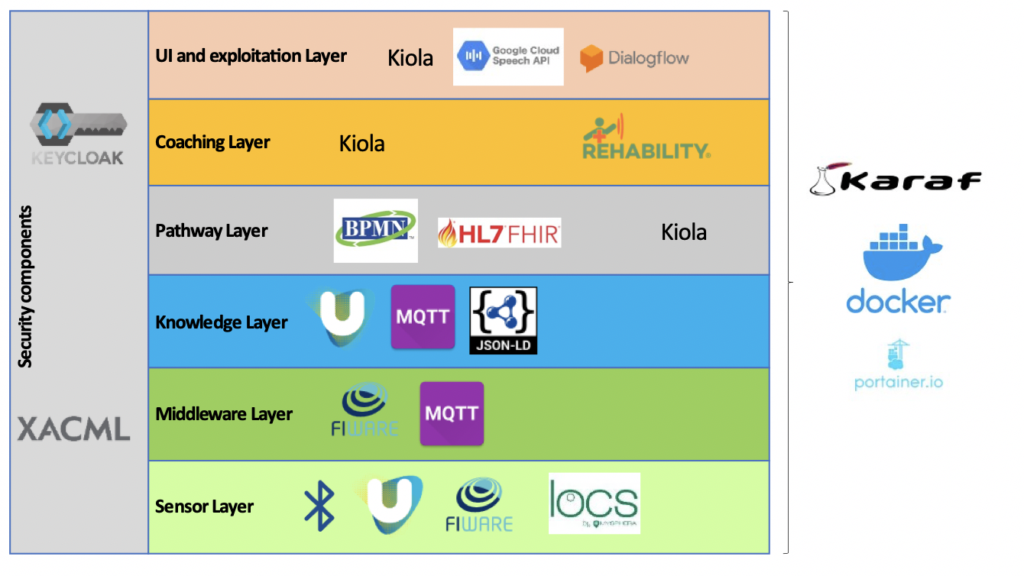

The standard technologies used at each of the layers are shown in figure 2.

Standards at the context integration level

At the bottom of the architecture, we have the connectivity to devices providing context information. These include indoor location devices and medical/fitness wearables providing vitals such as heart rate, weight, and blood pressure. The devices were selected based on the connectivity they provide through standard Bluetooth Low Energy (BLE)[1] profiles. BLE was chosen because devices supporting it are widely available and because it allows the contextual data framework to be extended with new devices.
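To illustrate what relying on a standard BLE profile buys, here is a minimal sketch that decodes the payload of the standard Heart Rate Measurement characteristic (UUID 0x2A37). The article does not say which profiles or client libraries vCare uses, so this is only an assumed example: the function name and the returned dictionary keys are hypothetical, and reading the bytes from an actual device is left out.

```python
import struct


def parse_heart_rate_measurement(data: bytes) -> dict:
    """Decode a BLE Heart Rate Measurement (0x2A37) payload.

    Layout per the Bluetooth Heart Rate Service: one flags byte, then the
    heart rate (uint8 or uint16), then optional energy expended and
    RR-interval fields. Key names in the result are illustrative only.
    """
    if len(data) < 2:
        raise ValueError("payload too short for a heart rate measurement")

    flags = data[0]
    offset = 1

    # Flag bit 0 selects the heart rate format: 0 = uint8, 1 = uint16 (LE).
    if flags & 0x01:
        (bpm,) = struct.unpack_from("<H", data, offset)
        offset += 2
    else:
        bpm = data[offset]
        offset += 1

    # Flag bit 3: energy expended field present (uint16, kilojoules).
    energy_kj = None
    if flags & 0x08:
        (energy_kj,) = struct.unpack_from("<H", data, offset)
        offset += 2

    # Flag bit 4: one or more RR intervals follow, in units of 1/1024 s.
    rr_seconds = []
    if flags & 0x10:
        while offset + 2 <= len(data):
            (raw,) = struct.unpack_from("<H", data, offset)
            rr_seconds.append(raw / 1024.0)
            offset += 2

    return {"bpm": bpm, "energy_kj": energy_kj, "rr_seconds": rr_seconds}
```

Because the byte layout is fixed by the profile, the same decoder works for any compliant heart rate device, which is what makes adding new devices inexpensive.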

At this level we can also find two IoT platforms (universAAL[2] and Fiware[3]); while universAAL is used to obtain context data and run edge processing algorithms, Fiware is used as a device management and remote setup tool.

The most relevant technology at this level is MQTT (Message Queuing Telemetry Transport), a lightweight publish-subscribe network protocol that transports messages between devices and services[4]. The protocol is an open OASIS standard and has also been published as an ISO/IEC standard (ISO/IEC 20922)[5]. In vCare the open-source RabbitMQ[6] broker is used as the MQTT broker.

The contribution of vCare to the integration of MQTT includes the definition of a topic exchange strategy and the standardization of message contents using JSON-LD[7]. The topic strategy is extensible, so that new data exchanges can be accommodated in the future.
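As a rough illustration of how a topic strategy and a JSON-LD message body fit together, the sketch below builds a topic string and a small observation message. The article does not publish the actual topic hierarchy or message schema, so everything here is assumed: the `vcare/<group>/<patient>/<kind>` layout, the function names and the example identifiers are hypothetical. The SOSA terms are used only because SOSA is one of the vocabularies the platform builds on.

```python
import json
from datetime import datetime, timezone


def build_topic(group: str, patient: str, kind: str, root: str = "vcare") -> str:
    """Build an MQTT topic using a hypothetical <root>/<group>/<patient>/<kind> layout."""
    levels = [root, group, patient, kind]
    for level in levels:
        # '/', '+' and '#' are the MQTT level separator and wildcards,
        # so they must not appear inside a single topic level.
        if not level or any(ch in level for ch in "/+#"):
            raise ValueError(f"invalid topic level: {level!r}")
    return "/".join(levels)


def build_observation(sensor_id: str, observed_property: str,
                      value: float, when: datetime) -> dict:
    """Build a JSON-LD observation using SOSA terms (illustrative schema)."""
    return {
        "@context": {
            "sosa": "http://www.w3.org/ns/sosa/",
            "xsd": "http://www.w3.org/2001/XMLSchema#",
        },
        "@type": "sosa:Observation",
        "sosa:madeBySensor": {"@id": sensor_id},
        "sosa:observedProperty": {"@id": observed_property},
        "sosa:hasSimpleResult": value,
        "sosa:resultTime": {"@value": when.isoformat(), "@type": "xsd:dateTime"},
    }


if __name__ == "__main__":
    topic = build_topic("hospital-a", "patient-42", "heart-rate")
    message = build_observation(
        "urn:example:sensor:hr-1",            # hypothetical identifiers
        "urn:example:property:heart-rate",
        72,
        datetime.now(timezone.utc),
    )
    # The serialized string is what would be published as the MQTT payload.
    print(topic)
    print(json.dumps(message, indent=2))
```

Under this assumed layout, adding a new kind of data exchange only means adding a new last topic level, while existing subscribers are unaffected.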

Standards at the intelligence, reasoning, and adaptation level

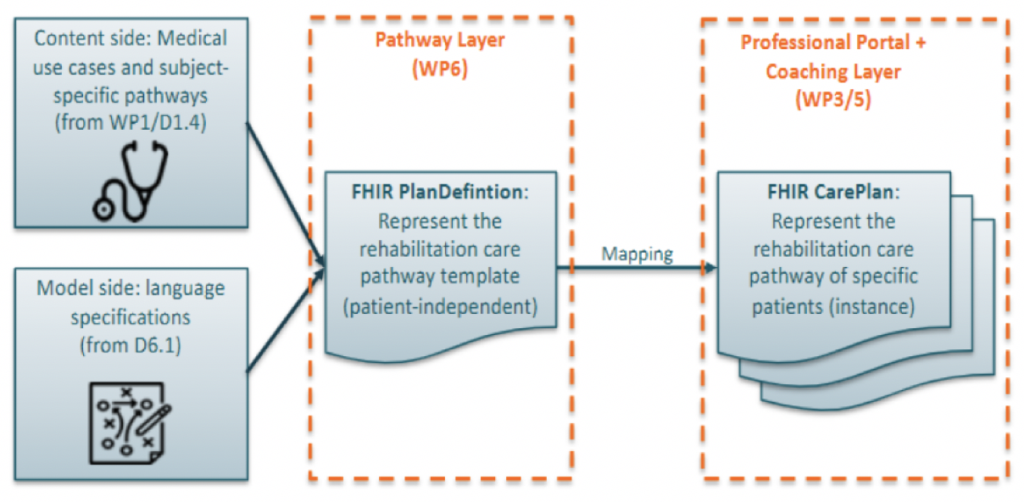

The coaching services provided by vCare are based on clinical pathways built with a graphical tool based on BPMN[8], which then translates each pathway into FHIR resources[9]. These resources are parsed into JSON-LD semantic objects and fused with the context data and interaction data. The reasoning and adaptation components use the result to configure the coaching interactions.

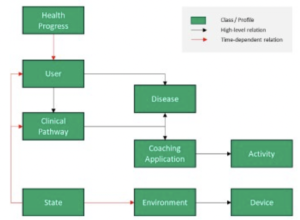

At the semantic level, vCare defined a base ontological model (as shown in figure 3), which is complemented by several well-known vocabularies and standards: FOAF, SSN, RDF, OWL, SOSA, HL7, etc.

Standards at the security level

At the security level, the selected standard is OAuth 2.0, chosen for the versatility of its authentication flows and because it makes single sign-on across applications and services possible. As its OAuth 2.0 server, vCare uses the open-source framework Keycloak[10], in which specific roles and authorization scopes have been defined for the whole vCare Coaching platform.

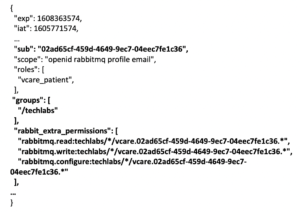

The main contribution of vCare in this regard is the integration of OAuth 2.0 into the MQTT message broker and its customization to the defined topic exchange strategy: depending on the app accessing the MQTT ecosystem, the access token issued by the OAuth 2.0 server grants access only to certain topics, preserving the end user’s privacy. Figure 4 gives an overview of how OAuth 2.0 token claims integrate into the vCare platform.

Authentication and authorization are implemented at two levels: 1) at group level, meaning that users in one group cannot access data from users in another, and 2) at topic level, meaning that some roles (such as the patient role) can only access their own data, while the doctor role can access data from all patients in a group.
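The two authorization levels can be sketched as a mapping from token claims to permitted MQTT topic filters. This is not the actual broker integration, which the article does not detail: the claim names (`group`, `roles`, `sub`), the `vcare/<group>/<patient>/...` topic layout and the function names are all assumptions, and the token is taken as already validated (signature and expiry checks are omitted).

```python
def topic_matches(topic_filter: str, topic: str) -> bool:
    """Return True if an MQTT topic matches a filter with '+' / '#' wildcards."""
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")
    for i, level in enumerate(filter_levels):
        if level == "#":
            # '#' is only valid as the last level; it then matches the
            # parent level and everything below it.
            return i == len(filter_levels) - 1
        if i >= len(topic_levels):
            return False
        if level != "+" and level != topic_levels[i]:
            return False
    return len(filter_levels) == len(topic_levels)


def allowed_filters(claims: dict) -> list:
    """Derive permitted topic filters from (hypothetical) token claims."""
    group = claims["group"]
    roles = set(claims.get("roles", []))
    filters = []
    if "doctor" in roles:
        # Group level: every patient in the doctor's own group.
        filters.append(f"vcare/{group}/#")
    if "patient" in roles:
        # Topic level: only the patient's own subtree inside the group.
        filters.append(f"vcare/{group}/{claims['sub']}/#")
    return filters


def can_access(claims: dict, topic: str) -> bool:
    """Check a concrete topic against the filters granted by the claims."""
    return any(topic_matches(f, topic) for f in allowed_filters(claims))


if __name__ == "__main__":
    patient = {"sub": "patient-42", "group": "hospital-a", "roles": ["patient"]}
    doctor = {"sub": "dr-7", "group": "hospital-a", "roles": ["doctor"]}
    print(can_access(patient, "vcare/hospital-a/patient-42/heart-rate"))
    print(can_access(patient, "vcare/hospital-a/patient-43/heart-rate"))
    print(can_access(doctor, "vcare/hospital-b/patient-1/heart-rate"))
```

Putting the group in a fixed topic level means that both rules reduce to plain topic-filter matching, which a broker can evaluate on every publish and subscribe.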

Standards at the DevOps level

The vCare Smart Coaching platform was designed as a decentralized and scalable system. Container technologies such as Karaf[11] and Docker Swarm[12] support these goals. With Karaf, we can implement coaching services and deploy them on any Karaf container. With Docker, we can easily create a distributed network topology by adding physical machines to the swarm and deploying one or more instances of the structural services on any of them.

This containerization also allows the system to be logically separated into different groups (as introduced in the security section), which provides a replica of the whole vCare platform for each new hospital or client willing to integrate. With this separation, vCare enforces user privacy as required by the GDPR[13].

Conclusions

Integrating existing services and applications into the vCare Smart Coaching platform required a strategy that concentrates the effort on the middleware enabling the integration rather than on modifying legacy systems. This strategy addressed three key challenges:

- A technology-independent communication protocol

- A common security framework

- A shared terminology to exchange data

The first challenge was resolved by selecting MQTT as the brokerage technology. This choice was driven by the fact that it is a technology-independent protocol suitable for all types of devices, even those with low processing capabilities. Additionally, MQTT is asymmetric: it places the complexity on the broker (the central element) and keeps clients simple and light.

The second challenge has been resolved by adding an OAuth2 framework providing security, authentication and single sign-on. The whole vCare Smart Coaching platform uses the same role and user database, simplifying the integration of third-party and/or external services and applications.

Lastly, the third challenge, related to interoperability, has been solved by defining an ontological model to be used for data exchanges, enabling all services and applications connected to the platform to understand the data contents.

[1] https://www.bluetooth.com/specifications/gatt/

[2] https://www.universaal.info/

[4] https://docs.oasis-open.org/mqtt/mqtt/v3.1.1/mqtt-v3.1.1.html

[5] https://www.iso.org/standard/69466.html

[9] https://www.hl7.org/fhir/resourcelist.html

[10] https://www.keycloak.org/

[11] https://karaf.apache.org/